Machine Learning News - Week 0

A concise digest of what was new in the ML world last week

Are you struggling to keep up with so many things happening in the ML world? Do you want to know what was new this week? This is for you! I’m experimenting with creating concise content with news of things happening in the ecosystem, short enough that you can get a high-level picture in under 10 minutes. If you have any feedback, send me a message on Twitter. Let’s go! 🔥

Stable Diffusion

It’s been a month and a half since the launch of Stable Diffusion, and every week is full of exciting stuff!

Demos: Lnyan released a free, open-source demo of outpainting with an infinite canvas. Waifu Diffusion (SD fine-tuned on 56K anime images) also got a nice new demo. The team behind Japanese Stable Diffusion wrote a blog post about their experience fine-tuning SD on Japanese-captioned images (demo). Nate Raw also implemented Stable Diffusion music videos, which use the audio to set the rate of interpolation, so the generated videos move to the beat.
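To give a feel for how audio can drive the rate of interpolation, here is a minimal sketch. It is not Nate Raw's implementation: it assumes we already have a per-frame audio energy envelope, and the names `interpolation_weights`, `lerp`, and the toy values are hypothetical. Loud frames advance the interpolation quickly, quiet frames barely move it.

```python
import numpy as np

def interpolation_weights(energy):
    """Map a per-frame audio energy envelope to interpolation positions in [0, 1]."""
    energy = np.asarray(energy, dtype=float)
    total = energy.sum()
    if total == 0:
        # Silent clip: fall back to a uniform interpolation rate.
        return np.linspace(0.0, 1.0, len(energy))
    # Cumulative energy, normalized so the last frame lands on 1.0.
    return np.cumsum(energy) / total

def lerp(a, b, t):
    """Linear interpolation between two latent vectors."""
    return (1.0 - t) * a + t * b

# Toy envelope (assumed values): quiet, quiet, a beat, quiet, quiet.
energy = [0.1, 0.1, 2.0, 0.1, 0.1]
weights = interpolation_weights(energy)

# Stand-ins for the latents of two prompts.
start, end = np.zeros(4), np.ones(4)
frames = [lerp(start, end, t) for t in weights]
```

The largest jump between consecutive frames happens on the beat. Real pipelines often use spherical rather than linear interpolation between latents; linear is used here only to keep the sketch short.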

Research: Apart from nice demos, there are many other exciting updates. Victor Gallego proposed Aesthetic Gradients (paper, explanation), which should allow generating images in your own styles without the further training that Textual Inversion or DreamBooth require. Two weeks ago, DreamFusion, a text-to-3D model, was announced. The original paper used Imagen, which is not publicly available, so the community implemented an open-source version powered by Stable Diffusion instead (code) in under a week.

Tooling: There have also been interesting tooling improvements. Nouamane from HF added multiple improvements that give a 2.96x speedup, generating a single image in 3.21 seconds! The Hugging Face diffusers library shipped a new release with features such as negative prompts and the speedups mentioned above.
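For intuition on negative prompts, here is a minimal sketch of the classifier-free guidance arithmetic, written in plain NumPy rather than with the diffusers API. In the usual setup, the negative prompt takes the place of the empty prompt in the unconditional branch, so each denoising step is pushed away from it. The function name `guided_noise` and the toy vectors are hypothetical.

```python
import numpy as np

def guided_noise(noise_negative, noise_prompt, guidance_scale=7.5):
    """Classifier-free guidance: start from the negative (or empty) prompt's
    noise prediction and extrapolate toward the prompt's prediction."""
    return noise_negative + guidance_scale * (noise_prompt - noise_negative)

# Toy noise predictions (assumed values) for the prompt and the negative prompt.
noise_prompt = np.array([1.0, 0.0])
noise_negative = np.array([0.0, 1.0])

out = guided_noise(noise_negative, noise_prompt, guidance_scale=2.0)
```

With a guidance scale of 1 the result is just the prompt's prediction. Larger scales move further along the direction pointing from the negative prompt to the prompt.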

Diffusion Models are everywhere!

We’re seeing diffusion models being applied to all kinds of tasks. Let’s do a super quick recap:

Google announced 3DiM, a diffusion model for 3D view synthesis from a few images, or even one! (paper, website)

MotionDiffuse, a model to generate human motion based on an input text, was just open-sourced. (code, paper, website)

Folding Diffusion by Kevin Wu, Kevin K. Yang, and others for protein structure generation (code, paper, demo)

TabDDPM is the first exploration I’ve seen to model tabular data using diffusion models. (code, paper)

A paper proposed an unsupervised image translation method that allows high-quality transfer conditioned on images and text. (code, paper)

In robotics, SE(3)-DiffusionFields use diffusion models to represent 6D grasp models. (code, paper, website)

Google announced Imagen Video, a model that generates high-quality videos conditioned on text. (video, paper)

Following the same line, Phenaki, another video generation model from Google, was released. This was particularly exciting because the prompts can also change over time, and the videos can be long, not just a few seconds (paper, website)

So many fields exploring diffusion models! This amazing repository compiles resources and papers on Diffusion models that you can check out!

Not Diffusion

There are many things outside diffusion models as well!

In the Natural Language Processing field,

CarperAI released trlX, a tool to train large language models using Reinforcement Learning. The library allows anyone to fine-tune models such as GPT-2 and GPT-J.

Self-ask is a new way to prompt language models to improve their ability to solve complex questions. The idea is that the model generates subquestions, answers them, and then answers the main question based on those intermediate answers.

CarperAI (again!) did a preliminary release of FIM, a large autoregressive model for infilling.
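To make the self-ask idea above concrete, here is a minimal sketch of a prompt builder. The helper name `build_self_ask_prompt` and the dictionary layout are hypothetical, and the exact wording of the scaffold ("Follow up:", "Intermediate answer:") is my recollection of the paper's format, so treat it as an assumption. The model is expected to continue the prompt by writing its own follow-up questions and intermediate answers.

```python
def build_self_ask_prompt(question, examples=()):
    """Assemble a few-shot self-ask style prompt ending at the new question."""
    parts = []
    for ex in examples:
        parts.append(f"Question: {ex['question']}")
        parts.append("Are follow up questions needed here: Yes.")
        for follow_up, answer in ex["steps"]:
            parts.append(f"Follow up: {follow_up}")
            parts.append(f"Intermediate answer: {answer}")
        parts.append(f"So the final answer is: {ex['final']}")
        parts.append("")  # blank line between demonstrations
    # The new question: the model continues from here.
    parts.append(f"Question: {question}")
    parts.append("Are follow up questions needed here:")
    return "\n".join(parts)

demo = {
    "question": "Who was president of the U.S. when superconductivity was discovered?",
    "steps": [
        ("When was superconductivity discovered?", "Superconductivity was discovered in 1911."),
        ("Who was president of the U.S. in 1911?", "William Howard Taft."),
    ],
    "final": "William Howard Taft",
}

prompt = build_self_ask_prompt(
    "Which is older, the diffusers library or Stable Diffusion?",
    examples=[demo],
)
print(prompt)
```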

In the audio field,

SpeechCLIP was announced, integrating speech with pre-trained vision and language models (code, paper, website). The model has some interesting properties, such as SOTA image-speech retrieval and zero-shot speech-text retrieval.

Google announced AudioLM, a framework to generate consistent and high-fidelity synthetic audio (blog).

In the robotics field,

The Pre-Grasp informed Dexterous Manipulation (PGDM) pipeline is a simple but powerful idea for robots to learn a variety of dexterous tasks. The authors also released a large benchmark for the task, and this method works without hyperparameter tuning!

VIMA proposes multimodal prompts to do general robot manipulation (code, paper, website)

Apart from models, there are also cool new datasets.

The Bloom Library is a multimodal dataset in over 300 languages for different tasks (website).

DR.BENCH is a dataset to evaluate clinical NLP models.

ImDrug is a new benchmark for imbalanced learning in drug discovery, with 54 tasks across 11 datasets.

On the evaluation side,

A new metric, Vendi Score, proposes an interpretable, cross-domain way to measure diversity.

Hugging Face published a paper on best practices for scalable data and model measurements.
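Coming back to the Vendi Score: it is the exponential of the Shannon entropy of the eigenvalues of the similarity matrix divided by the number of samples. Here is a minimal NumPy sketch, assuming a symmetric, positive semidefinite similarity matrix with ones on the diagonal. The function name `vendi_score` and the toy matrices are mine, not the authors' reference code.

```python
import numpy as np

def vendi_score(similarity):
    """Exponential of the Shannon entropy of the eigenvalues of K / n."""
    K = np.asarray(similarity, dtype=float)
    n = K.shape[0]
    eigvals = np.linalg.eigvalsh(K / n)
    # Drop numerically zero eigenvalues, using the convention 0 * log(0) = 0.
    eigvals = eigvals[eigvals > 1e-12]
    entropy = -np.sum(eigvals * np.log(eigvals))
    return float(np.exp(entropy))

identical = np.ones((4, 4))  # four copies of the same item
distinct = np.eye(4)         # four items with no similarity to each other

score_identical = vendi_score(identical)  # approximately 1.0
score_distinct = vendi_score(distinct)    # approximately 4.0
```

The score reads as an "effective number" of distinct items, which is what makes it interpretable: four identical samples count as one, four unrelated samples count as four.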

A bit of everything

DeepMind announced AlphaTensor, a way to use ML to discover algorithms to multiply matrices!

Meta released AITemplate (AIT), a new engine for super fast inference.

Nerfstudio, a plug-and-play super cool Python library, makes it super easy to create your own NeRFs!

Activeloop released Deep Lake, a new product to store data of different modalities with visualizations and fast streaming.

VToonify by Shuai Yang allows portrait video style transfer, and it’s a lot of fun to try out!

This paper revisits scaling laws in language and vision models, just as its name says, and presents a way to estimate the scaling law parameters based on learning curves.
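As a rough illustration of what estimating scaling law parameters from a learning curve means, here is a minimal sketch that fits the simplest power law, loss ≈ beta * size^(-c), by least squares in log-log space. This is not the estimator proposed in the paper, it ignores any irreducible-loss term, and the data points are synthetic. The function name `fit_power_law` is hypothetical.

```python
import numpy as np

def fit_power_law(sizes, losses):
    """Fit loss ~ beta * size**(-c) with a straight line in log-log space."""
    slope, intercept = np.polyfit(np.log(sizes), np.log(losses), deg=1)
    beta = float(np.exp(intercept))
    c = float(-slope)
    return beta, c

# Synthetic learning curve generated from known parameters (beta=5.0, c=0.3).
sizes = np.array([1e3, 1e4, 1e5, 1e6])
losses = 5.0 * sizes ** -0.3

beta, c = fit_power_law(sizes, losses)

# Extrapolate to a larger training set size with the fitted parameters.
predicted_loss = beta * 1e7 ** -c
```

On real learning curves the points are noisy and the loss flattens out at some floor, which is where more careful estimators matter.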

Learning

Jay Alammar has a new amazing Illustrated Stable Diffusion. Jay’s guides are famous for amazing visuals and clear explanations, so they are definitely worth checking out!

If you’re interested in Graph Neural Networks and Graph Transformers, this Survey should be a fun read!

Explosion (the creators of spaCy) wrote a nice blog post on end-to-end coreference resolution.

This Monday, there is a virtual talk at Columbia on protein language models.

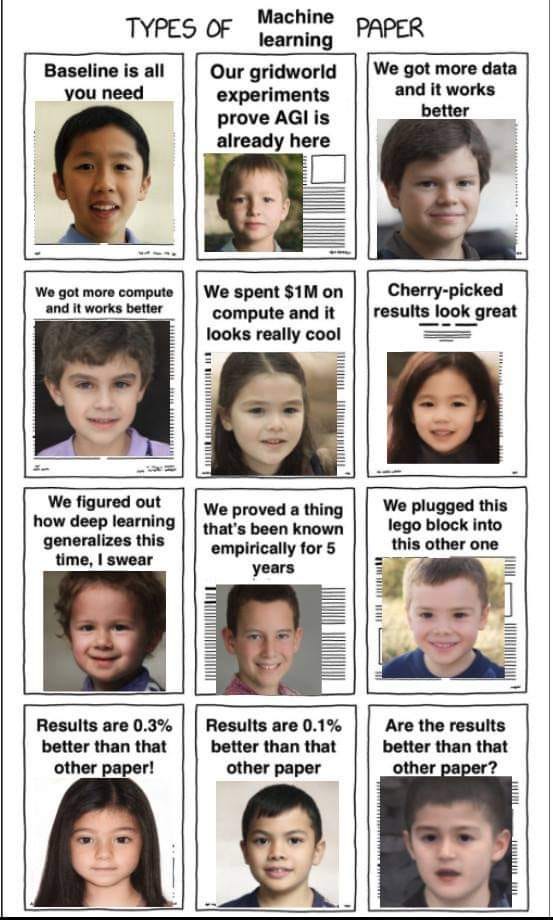

Meme of the week

Source: Reddit